After exploring the risks of artificial intelligence (manipulation, hallucinations, loss of expertise, and accountability gaps), a practical question remains: how can AI be used correctly without falling into the trap?

AI is unavoidable.

But misuse is not.

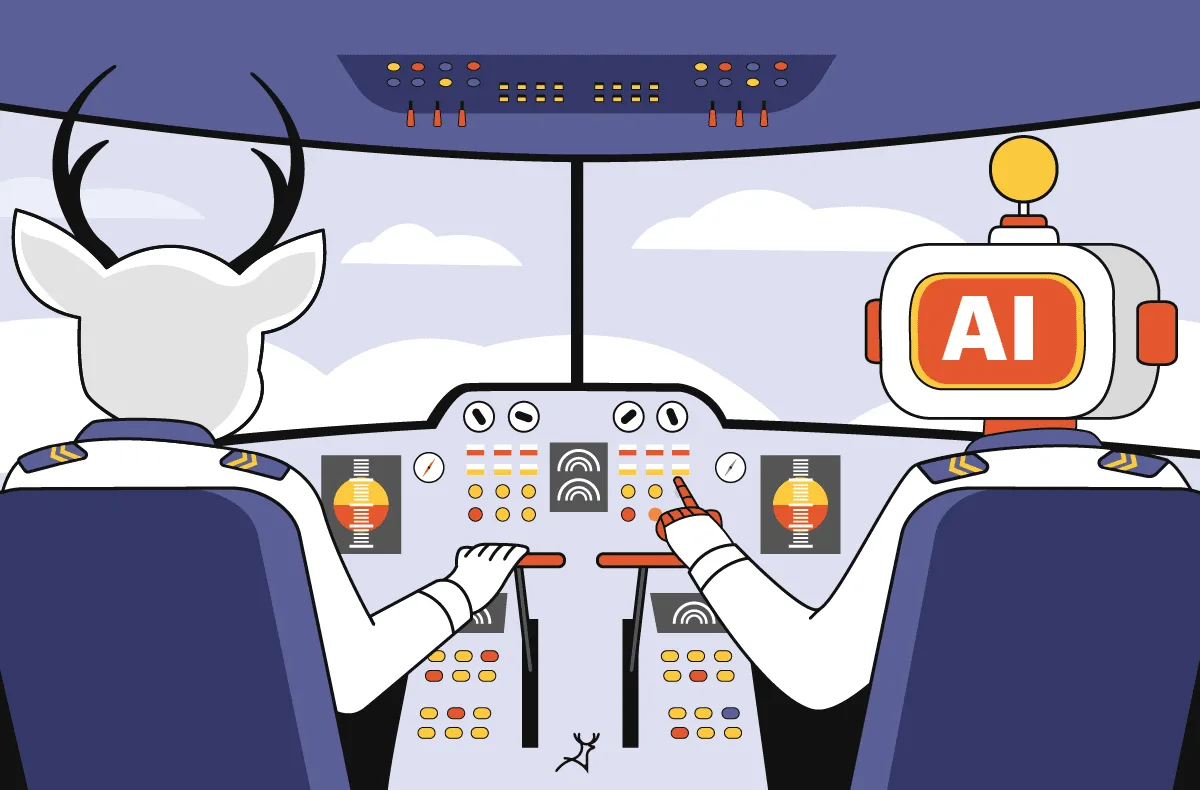

Core Principle: AI Is a Tool, Not an Authority

AI must remain:

- an assistant
- a support system
- an accelerator

AI must never become:

- a final authority
- a substitute for judgment
- a replacement for responsibility

This principle defines safe AI usage.

Rule 1: Use AI for Support, Not Decisions

AI excels at:

- drafting
- summarizing
- idea generation
- pattern recognition

AI must not:

- decide outcomes
- replace professionals
- carry responsibility

Humans must remain decision-makers.

Rule 2: Verification Is Mandatory

Any critical AI output must be:

- fact-checked
- validated
- questioned

Without verification, AI becomes an unchecked authority.

Rule 3: Do Not Use AI as a Sole Teacher

AI can explain, but it does not:

- recognize when you have misunderstood
- correct flawed intuition
- build real experience for you

Learning requires effort, not just answers.

Rule 4: Ask for Reasoning, Not Just Results

Good AI usage focuses on:

- explanations
- limitations
- alternatives

Results without reasoning create blind trust.

Rule 5: Keep Responsibility Human

If AI is involved:

- responsibility remains human
- accountability cannot be delegated

“There was an algorithm” is not an excuse.

Rule 6: Avoid Daily Dependency

If AI becomes indispensable for basic thinking, expertise erodes.

Healthy use means control, not reliance.

Rule 7: Define No-AI Zones

Clearly separate:

- safe AI tasks
- forbidden decision areas

Boundaries protect judgment.

Final Conclusion

Using AI without falling into the trap is ultimately a matter of discipline, not software.

👉 AI amplifies behavior.

👉 Human judgment determines direction.

Used wisely, AI is powerful.

Used blindly, AI is dangerous.

The Artificial Intelligence Trap Series:

✍️ Author: Bejenaru Alexandru Ionut

🔗 Internal link: https://diagnozabam.ro/sfaturi